We propose a method for editing images from human instructions: given an input image and a written instruction that tells the model what to do, our model follows these instructions to edit the image. To obtain training data for this problem, we combine the knowledge of two large pretrained models---a language model (GPT-3) and a text-to-image model (Stable Diffusion)---to generate a large dataset of image editing examples. Our conditional diffusion model, InstructPix2Pix, is trained on our generated data, and generalizes to real images and user-written instructions at inference time. Since it performs edits in the forward pass and does not require per-example fine-tuning or inversion, our model edits images quickly, in a matter of seconds. We show compelling editing results for a diverse collection of input images and written instructions.

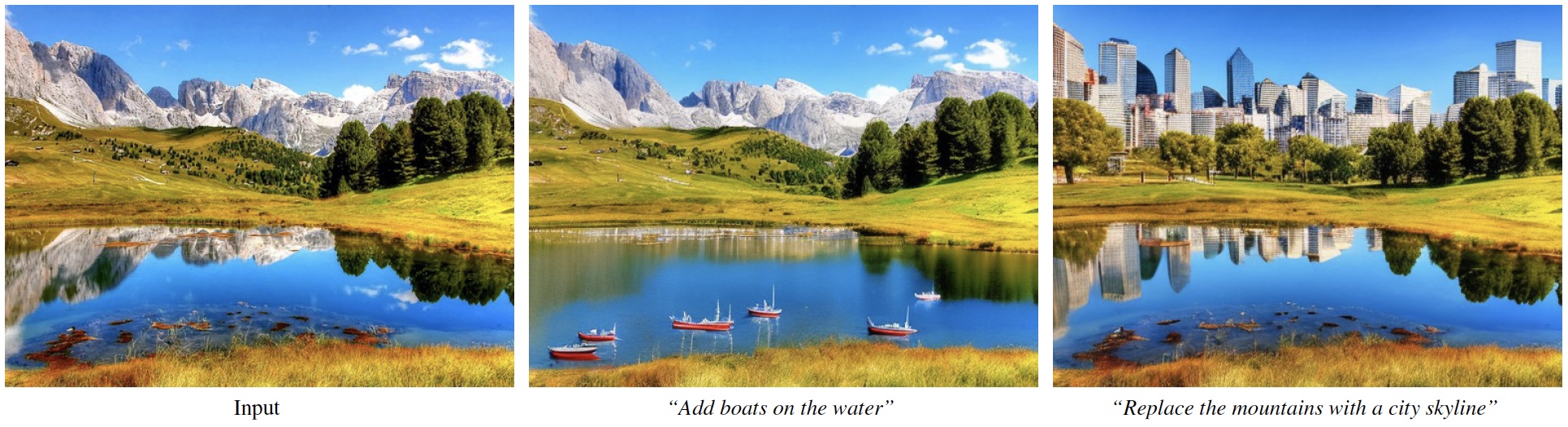

Note that isolated changes also bring along

accompanying contextual effects: the addition of boats also adds wind ripples in the water, and the added

city skyline is reflected on the lake. (source)

Note that isolated changes also bring along

accompanying contextual effects: the addition of boats also adds wind ripples in the water, and the added

city skyline is reflected on the lake. (source)

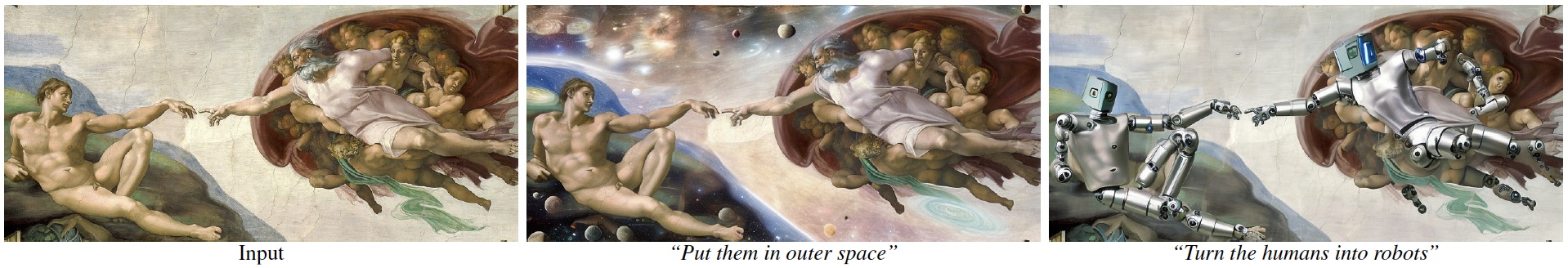

Despite being trained at 256x256 resolution, our model can perform realistic edits images up to 768-width resolution.

Despite being trained at 256x256 resolution, our model can perform realistic edits images up to 768-width resolution.

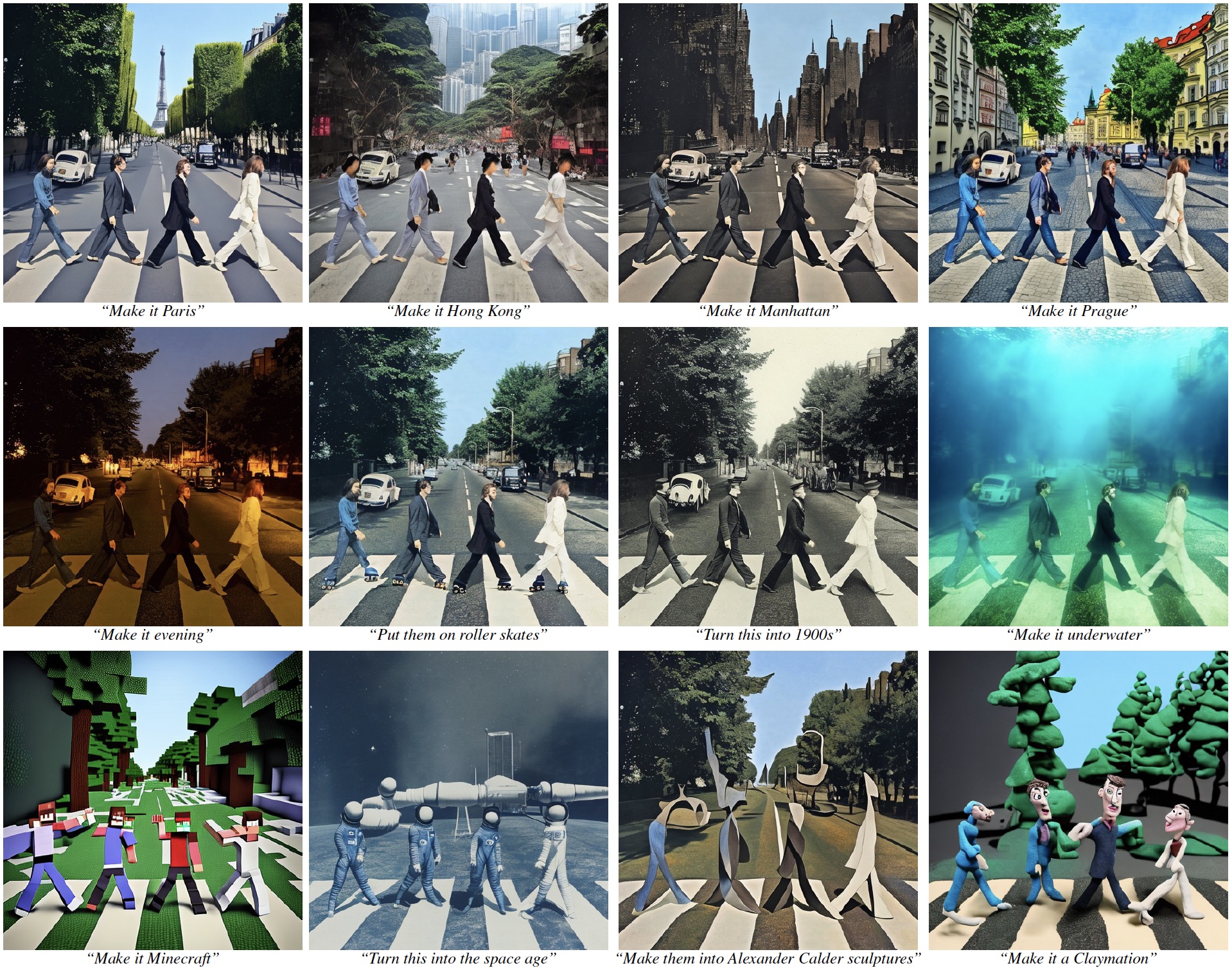

A single image (in this case, the iconic Beatles Abbey Road album cover) can be transformed in a large variety of ways.

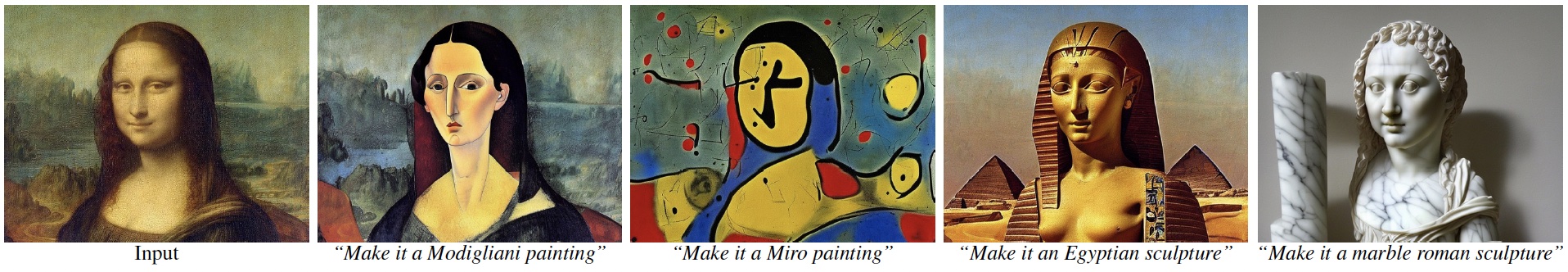

A single image (in this case, the iconic Beatles Abbey Road album cover) can be transformed in a large variety of ways. Leonardo da Vinci's Mona Lisa transformed into various artistic mediums.

Leonardo da Vinci's Mona Lisa transformed into various artistic mediums.

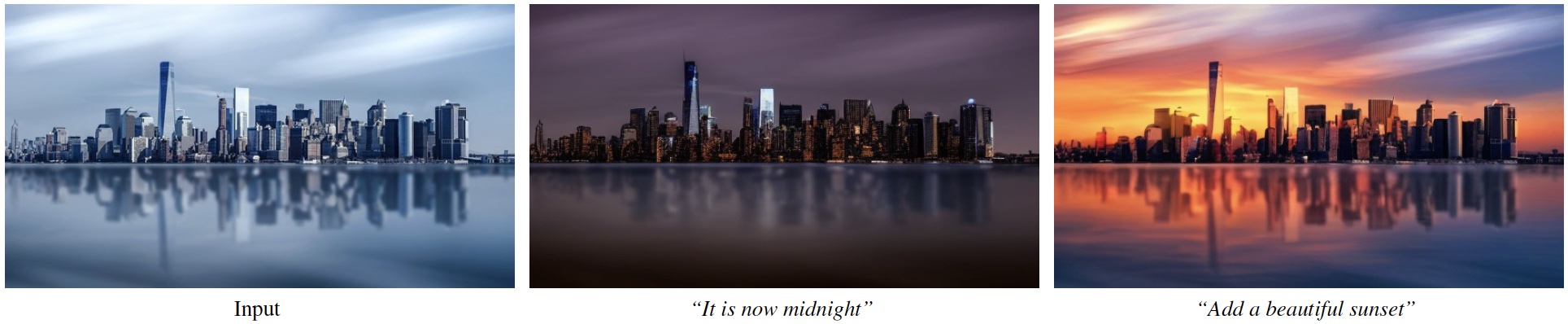

A photograph of a cityscape edited to show different times of day (source).

A photograph of a cityscape edited to show different times of day (source).

Vermeer's Girl with a Pearl Earring with a variety of edits.

Vermeer's Girl with a Pearl Earring with a variety of edits.

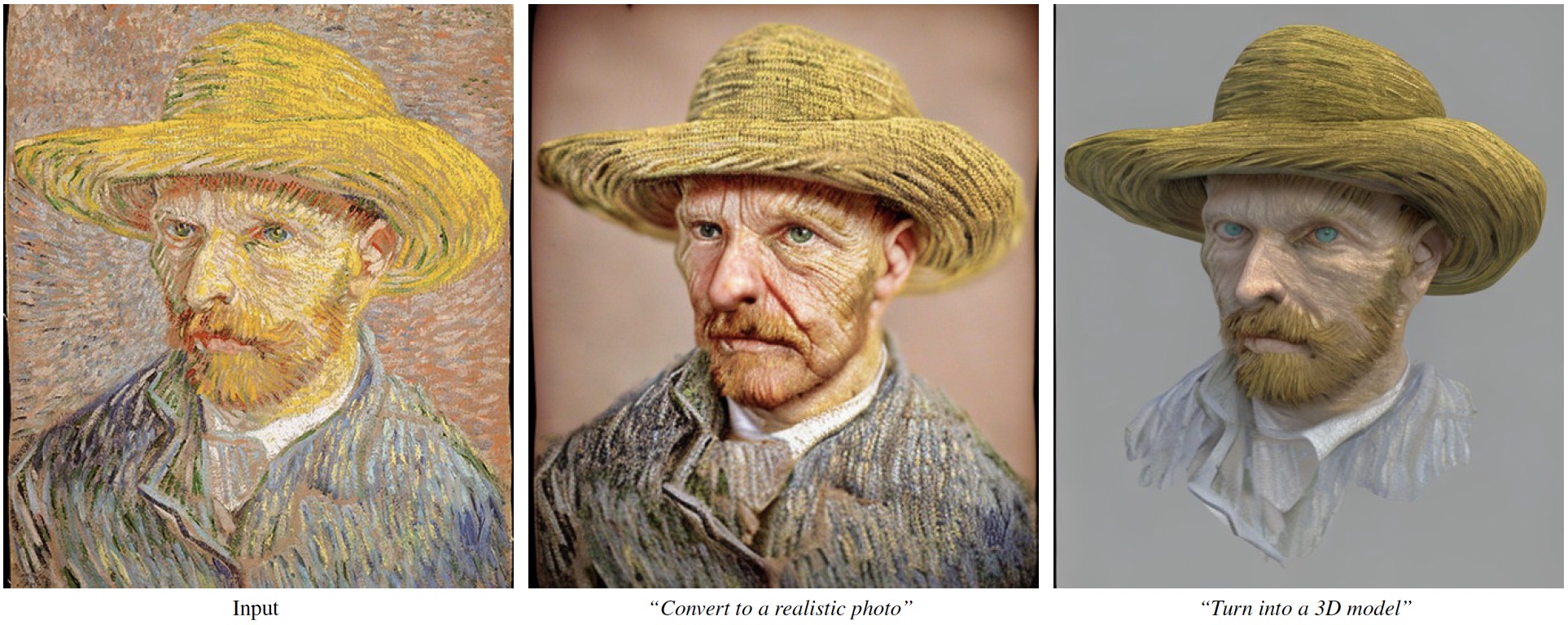

Van Gogh's Self-Portrait with a Straw Hat in different mediums.

Van Gogh's Self-Portrait with a Straw Hat in different mediums.

Leighton's Lady in a Garden moved to a new setting.

Leighton's Lady in a Garden moved to a new setting.

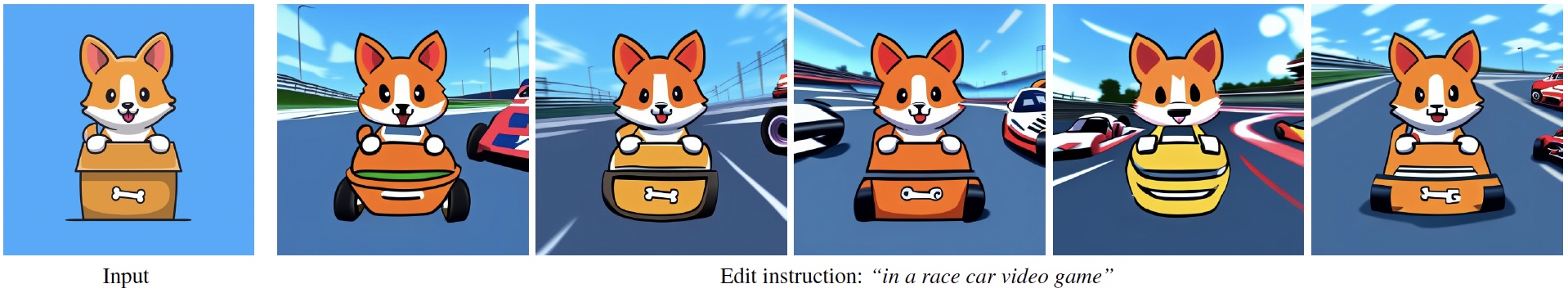

By varying the latent noise, our model can produce many possible image edits for the same input image and

instruction.

By varying the latent noise, our model can produce many possible image edits for the same input image and

instruction.

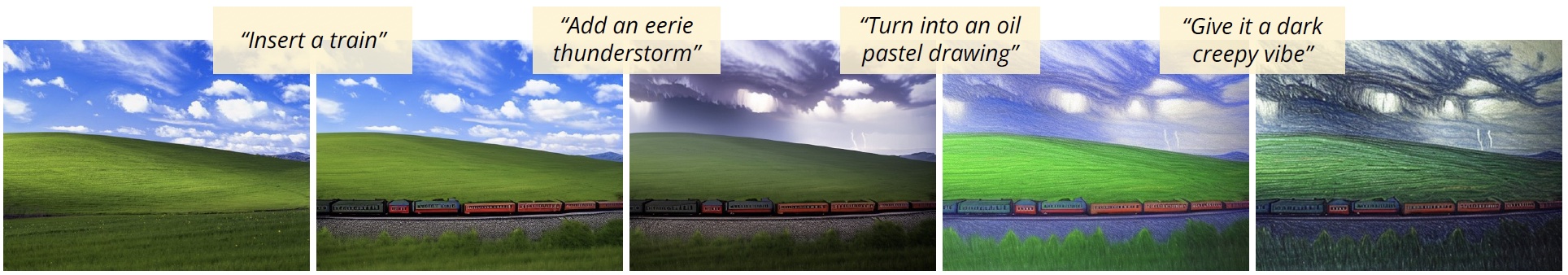

Applying our model recurrently with different instructions results in compounded edits.

Applying our model recurrently with different instructions results in compounded edits.

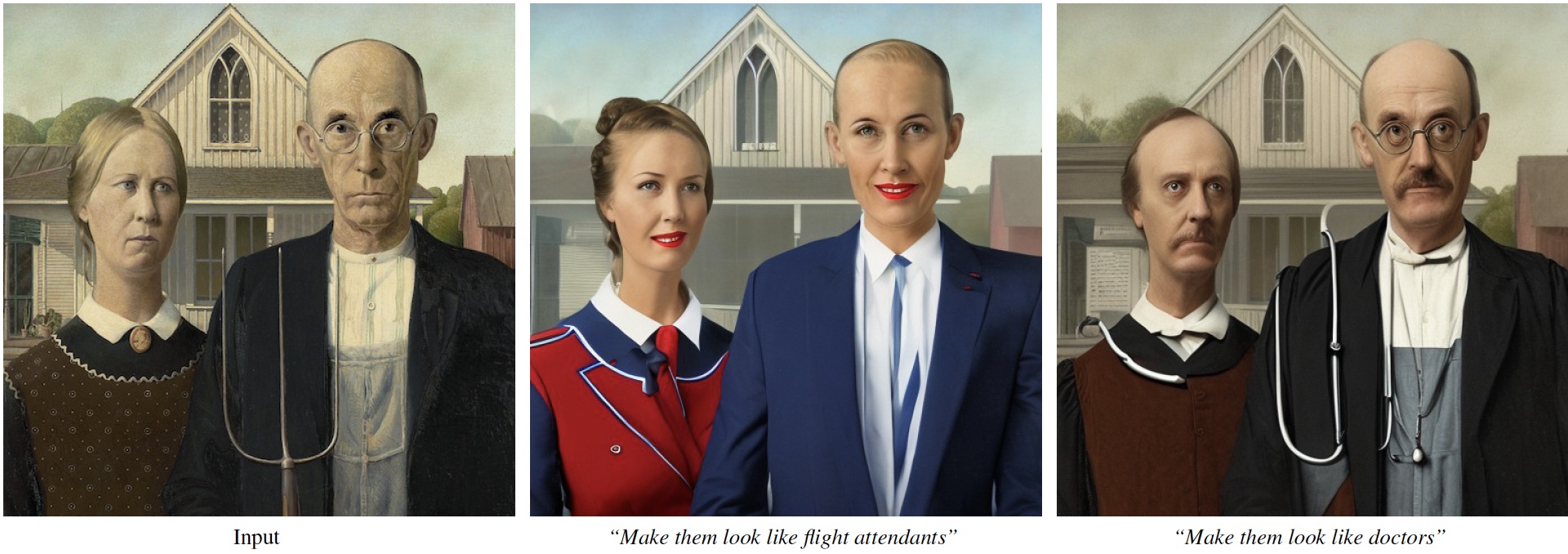

Our method reflects biases from the data and models it is based upon, such as correlations between

profession and gender.

Our method reflects biases from the data and models it is based upon, such as correlations between

profession and gender.

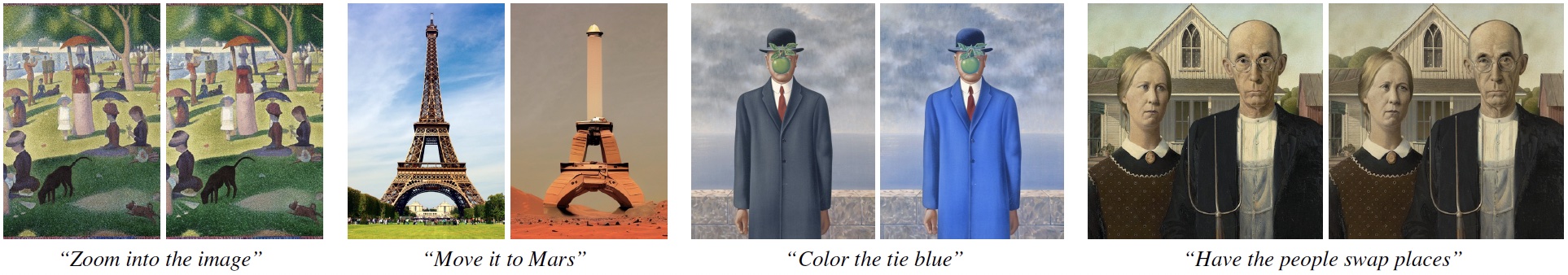

Failure cases. Left to right: our model is not capable of performing viewpoint changes, can make undesired

excessive changes to the image, can sometimes fail to isolate the specified object, and has difficulty

reorganizing or swapping objects with each other.

Failure cases. Left to right: our model is not capable of performing viewpoint changes, can make undesired

excessive changes to the image, can sometimes fail to isolate the specified object, and has difficulty

reorganizing or swapping objects with each other.

@InProceedings{brooks2022instructpix2pix,

author = {Brooks, Tim and Holynski, Aleksander and Efros, Alexei A.},

title = {InstructPix2Pix: Learning to Follow Image Editing Instructions},

booktitle = {CVPR},

year = {2023},

}

We thank Ilija Radosavovic, William Peebles, Allan Jabri, Dave Epstein, Kfir Aberman, Amanda Buster, and David Salesin. Tim Brooks is funded by an NSF Graduate Research Fellowship. Additional funding by a research grant from SAP and a gift from Google.

Website adapted from the following template.